Facebook admitted that its so-called “fact-checking” program is actually cranking out opinions used to censor certain viewpoints.

In its latest legal battle with TV journalist John Stossel over a post about the origins of the deadly 2020 California forest fires, Facebook, now rebranded as “Meta,” claims that its “fact-checking” program should not be the target of a defamation suit because the work of regulating content is done by third-party organizations that are entitled to their “opinion.”

Stossel’s original complaint asked whether “Facebook and its vendors defame a user who posts factually accurate content, when they publicly announce that the content failed a ‘fact-check’ and is ‘partly false,’ and by attributing to the user a false claim that he never made?” Facebook, however, claimed that the counter-article written by Climate Feedback is not necessarily the tech giant’s responsibility.

Facebook went on to complain that Stossel’s problem isn’t with the Silicon Valley giant’s “labels” on his content but with the obscure organizations that Facebook employs to do its “fact-checking” dirty work.

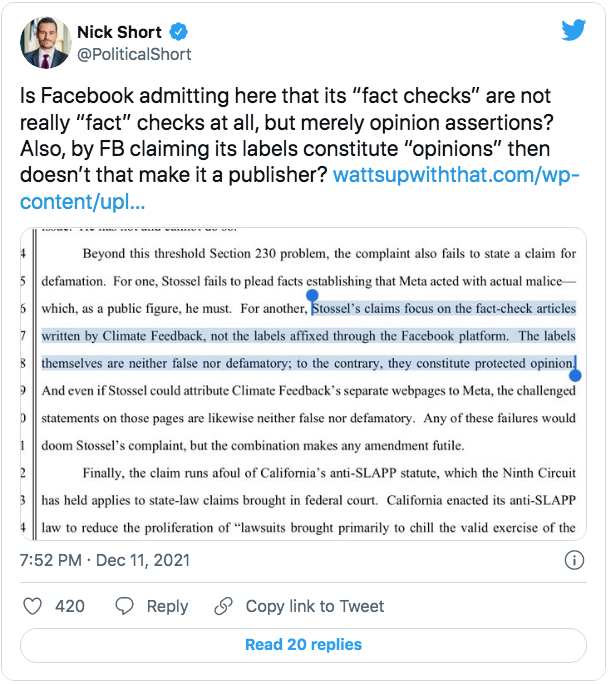

“The labels themselves are neither false nor defamatory; to the contrary, they constitute protected opinion,” Facebook admitted. “And even if Stossel could attribute Climate Feedback’s separate webpages to Meta, the challenged statements on those pages are likewise neither false nor defamatory. Any of these failures would doom Stossel’s complaint, but the combination makes any amendment futile.”

It’s no secret that Facebook uses its “fact-checking” program to curb information it wants censored, and this November court filing offers more insight into the Big Tech company’s methods and twisted rationale.

“The independence of the fact checkers is a deliberate feature of Meta’s fact-checking program, designed to ensure that Meta does not become the arbiter of truth on its platforms,” the filing stated, before admitting that “Meta identifies potential misinformation for fact-checkers to review and rate. … [I]t leaves the ultimate determination whether information is false or misleading to the fact-checkers. And though Meta has designed its platforms so that fact-checker ratings appear next to content that the fact-checkers have reviewed and rated, it does not contribute to the substance of those ratings.”